Machine learning is pushing online search into a new realm of digital marketing: visual search in e-commerce.

Whether you’re an entrepreneur or have an established online store, understanding this major shift in online search behavior and positioning your brand for it can help you take your business to the next level.

In this article, we’ll explain how visual search works on the back end and how you can use it to increase your store’s sales and reduce cart abandonment rates.

How Does Visual Search Work?

Let’s say you’re out shoe shopping and find a pair of sneakers you really like. The salesperson tells you that this particular pair isn’t available in your size. So you take a picture of the sneakers, cross your fingers, and hope to find a similar pair elsewhere.

This is where visual search comes in.

With visual search, you can use an image of a product as your search query. Here’s how it works: you upload the image to the visual search tool, hit the search button, and get results from online stores selling the same product (or similar products). You do all of this without typing a single word, using only an image of the sneakers you wanted.

The biggest benefit visual search brings to the table is that it takes words out of the search process.

The problem with text-based search is that there’s often a semantic disparity between how retailers describe products and how consumers search for them. You might search for “grey running shoes,” while the actual product description reads more like “breathable mesh upper with an out-turned collar for Achilles comfort.” True story.

So, how does visual search take semantic disparity out of the equation? Two words: neural networks.

Before we dive into the technical bits, let’s get a high-level view of how the visual search process works.

Main Success Scenario of the Visual Search Process

Following our sneaker example, you already have an image of the grey running shoes that you’d like to purchase. You upload it to a website that uses visual search and wait for the search results.

On the back end, the image you just uploaded is analyzed and passed through an artificial neural network to generate image descriptors. For simplicity, think of image descriptors as keywords (or tags) that describe the image, such as the color, material, and type of the shoes.
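To make the idea of image descriptors concrete, here is a minimal sketch. It assumes, hypothetically, that the network outputs a score for each candidate label of each attribute (color, material, type), and that the descriptor for each attribute is simply the highest-scoring label. The scores are made-up numbers for illustration, not output from a real model.

```python
# Hypothetical per-attribute scores standing in for what a trained network
# might output for one uploaded photo (made-up numbers for illustration).
model_scores = {
    "color": {"grey": 0.81, "brown": 0.12, "black": 0.07},
    "material": {"knit fabric": 0.74, "leather": 0.20, "canvas": 0.06},
    "type": {"sneaker": 0.93, "boot": 0.05, "sandal": 0.02},
}


def to_descriptors(scores: dict[str, dict[str, float]]) -> dict[str, str]:
    """Pick the highest-scoring label for each attribute."""
    return {
        attribute: max(label_scores, key=label_scores.get)
        for attribute, label_scores in scores.items()
    }


descriptors = to_descriptors(model_scores)
print(descriptors)
# {'color': 'grey', 'material': 'knit fabric', 'type': 'sneaker'}
```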

Once the artificial neural network outputs image descriptors, they’re matched against the existing search index. This search index was created when the visual search module was integrated into the website. It contains a list of all the products the online store sells along with their corresponding image descriptors.
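One common way to match tag-style descriptors against an index is an inverted index, which maps each descriptor to the products that carry it. The sketch below assumes a tiny hypothetical catalog (IDs such as `SKU-1` are invented) and returns every product that shares at least one descriptor with the uploaded image.

```python
from collections import defaultdict

# Hypothetical search index: product ID -> image descriptors.
search_index = {
    "SKU-1": {"color": "grey", "material": "knit fabric", "type": "sneaker"},
    "SKU-2": {"color": "brown", "material": "leather", "type": "boot"},
    "SKU-3": {"color": "grey", "material": "leather", "type": "sneaker"},
}


def build_inverted_index(index):
    """Map each (attribute, value) pair to the set of products that have it."""
    inverted = defaultdict(set)
    for product_id, descriptors in index.items():
        for pair in descriptors.items():
            inverted[pair].add(product_id)
    return inverted


def match(query, inverted):
    """Return IDs of products sharing at least one descriptor with the query."""
    candidates = set()
    for pair in query.items():
        candidates |= inverted.get(pair, set())
    return candidates


inverted = build_inverted_index(search_index)
query = {"color": "grey", "material": "knit fabric", "type": "sneaker"}
print(sorted(match(query, inverted)))  # ['SKU-1', 'SKU-3']
```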

Finally, the algorithm filters and ranks the search results by a similarity score, so the most similar shoes are shown first. The website displays the results, you find the pair of sneakers you were looking for, and you click the Buy button to add them to your cart.
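The article doesn’t specify how the similarity score is computed. As one simple assumption, the sketch below scores each product by the fraction of query descriptors it matches, drops results below a hypothetical `min_score` threshold, and sorts the rest so the most similar shoes come first. Production systems often compare numeric feature vectors instead, but the filter-then-rank step looks much the same.

```python
def similarity(query: dict[str, str], descriptors: dict[str, str]) -> float:
    """Fraction of the query's attributes whose values match (0.0 to 1.0)."""
    if not query:
        return 0.0
    matches = sum(1 for attr, value in query.items() if descriptors.get(attr) == value)
    return matches / len(query)


def rank(query, index, min_score=0.5):
    """Filter out weak matches, then sort by descending similarity score."""
    scored = [(pid, similarity(query, desc)) for pid, desc in index.items()]
    kept = [(pid, score) for pid, score in scored if score >= min_score]
    return sorted(kept, key=lambda item: item[1], reverse=True)


# Hypothetical index entries for illustration.
index = {
    "SKU-1": {"color": "grey", "material": "knit fabric", "type": "sneaker"},
    "SKU-2": {"color": "brown", "material": "leather", "type": "boot"},
    "SKU-3": {"color": "grey", "material": "leather", "type": "sneaker"},
}
query = {"color": "grey", "material": "knit fabric", "type": "sneaker"}
print(rank(query, index))
# [('SKU-1', 1.0), ('SKU-3', 0.6666666666666666)]
```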

Let’s take a closer look at how the visual search tool builds the search index it compares your image against, and how it trains its artificial neural network to return quick, accurate results.

Creating the Search Index

Online retailers have product catalogs full of images of the products they’re selling. This catalog of images is used to create the search index. Here’s how:

Once the visual search module is integrated with the online store, it takes the product catalog and separates the images from their metadata. For sneakers, the metadata might include the ID number, color, material, type, weight, offset value, and so on.
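Here is a minimal sketch of that separation step, assuming a hypothetical catalog format in which each product record has an `image` field (a file path) plus metadata fields. The product ID is kept as the key for both halves so the descriptors generated later can be linked back to the right product.

```python
# Hypothetical catalog records; field names and values are illustrative.
catalog = [
    {"id": "SKU-1", "image": "img/sku1.jpg", "color": "grey",
     "material": "knit fabric", "weight_g": 260, "offset_mm": 8},
    {"id": "SKU-2", "image": "img/sku2.jpg", "color": "brown",
     "material": "leather", "weight_g": 410, "offset_mm": 12},
]


def split_catalog(records):
    """Separate image paths from metadata, keyed by product ID."""
    images, metadata = {}, {}
    for record in records:
        product_id = record["id"]
        images[product_id] = record["image"]
        metadata[product_id] = {
            k: v for k, v in record.items() if k not in ("id", "image")
        }
    return images, metadata


images, metadata = split_catalog(catalog)
print(images["SKU-1"])                # img/sku1.jpg
print(metadata["SKU-1"]["weight_g"])  # 260
```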

Next, the images of the shoes are sent to the artificial neural network. Its job is to generate image descriptors, i.e., the features of each image it receives, and these descriptors make up the search index.

For instance, from an image of a sneaker alone, the neural network can’t determine its ID number, but it can determine the color of the shoe. That’s why image descriptors only capture what can be seen in the image, such as color, material, and type.
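To illustrate why descriptors come only from what is visible, the sketch below swaps the neural network for a deliberately simple stand-in: it averages an image’s RGB pixels and picks the nearest color from a small hypothetical palette. The product ID never appears in the pixels; it comes only from the catalog key used to store the result. Images are represented as plain lists of (R, G, B) tuples to keep the example dependency-free.

```python
# Hypothetical reference palette; a real model would learn colors from data.
PALETTE = {
    "grey": (128, 128, 128),
    "brown": (120, 72, 40),
    "black": (20, 20, 20),
    "white": (240, 240, 240),
}


def average_color(pixels):
    """Mean (R, G, B) over all pixels."""
    n = len(pixels)
    return tuple(sum(p[i] for p in pixels) / n for i in range(3))


def describe_color(pixels):
    """Name of the palette color closest (squared Euclidean) to the average."""
    avg = average_color(pixels)
    return min(
        PALETTE,
        key=lambda name: sum((a - b) ** 2 for a, b in zip(avg, PALETTE[name])),
    )


def build_search_index(images):
    """Map product ID -> descriptors generated from the image pixels alone."""
    return {pid: {"color": describe_color(px)} for pid, px in images.items()}


# Tiny hypothetical "images": a few pixels each.
images = {
    "SKU-1": [(130, 130, 125), (120, 125, 128), (135, 132, 130)],
    "SKU-2": [(115, 70, 45), (125, 75, 38)],
}
print(build_search_index(images))
# {'SKU-1': {'color': 'grey'}, 'SKU-2': {'color': 'brown'}}
```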

Training the Neural Network

The artificial neural network is what does all the hard work in the visual search module. But before it can run search queries, it has to be trained. Training means repeatedly feeding it large numbers of labeled shoe images so that it learns to identify the features of the shoes and generate accurate image descriptors.

To train the artificial neural network, we give it a large collection of labeled shoe images. Let’s assume that after the first iteration, the network assigns the following image descriptors to the pair of sneakers from our example: color: brown, material: knit fabric, type: sneaker. The correct result would have been: color: grey, material: knit fabric, type: sneaker.

Because this result isn’t accurate, the training algorithm marks the output as incorrect, adjusts the network’s internal weights to reduce the error, and runs another iteration.

Let’s say that after the next iteration, the network identifies the correct image descriptors. The training algorithm marks this output as correct.
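The predict, compare, adjust, repeat loop described above can be sketched with a multi-class perceptron. This is a swapped-in technique: a far simpler model than a neural network, trained on the same principle, and not how any particular visual search vendor trains its models. The labeled examples are hypothetical average RGB values scaled to 0 to 1, with a constant bias term appended.

```python
# Hypothetical labeled training data: average (R, G, B) of a shoe photo,
# scaled to 0-1, paired with the correct color descriptor.
training_data = [
    ((0.5, 0.5, 0.5), "grey"),
    ((0.6, 0.6, 0.6), "grey"),
    ((0.5, 0.3, 0.2), "brown"),
    ((0.6, 0.35, 0.15), "brown"),
]
LABELS = ["grey", "brown"]


def features(rgb):
    """Append a constant bias term so the model can learn an offset."""
    return (*rgb, 1.0)


def score(weight_vector, x):
    """Dot product of one label's weights with the feature vector."""
    return sum(w * xi for w, xi in zip(weight_vector, x))


def predict(weights, rgb):
    """Return the label whose weights give the highest score."""
    x = features(rgb)
    return max(LABELS, key=lambda label: score(weights[label], x))


def train(data, max_epochs=1000):
    """On each wrong prediction, move weights toward the correct label and
    away from the predicted one. Stop after an epoch with no mistakes."""
    weights = {label: [0.0] * 4 for label in LABELS}
    for epoch in range(1, max_epochs + 1):
        mistakes = 0
        for rgb, label in data:
            guess = predict(weights, rgb)
            if guess != label:  # output marked incorrect: adjust weights
                mistakes += 1
                x = features(rgb)
                weights[label] = [w + xi for w, xi in zip(weights[label], x)]
                weights[guess] = [w - xi for w, xi in zip(weights[guess], x)]
        if mistakes == 0:  # every output marked correct
            return weights, epoch
    return weights, max_epochs


weights, epochs = train(training_data)
print(f"stopped after {epochs} epochs")
print([predict(weights, rgb) for rgb, _ in training_data])
# ['grey', 'grey', 'brown', 'brown']
```

Because these toy examples are linearly separable, the perceptron is guaranteed to stop making mistakes after a finite number of updates.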

Once the artificial neural network is trained, it can process images and accurately identify their corresponding image descriptors.

3 Reasons Why You Need Visual Search for Your Online Store

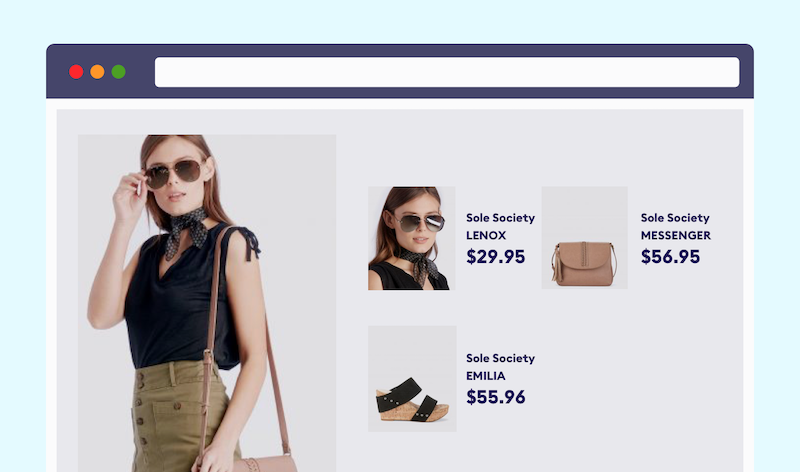

As you can probably imagine, visual search is revolutionizing e-commerce websites. Brands like eBay, Amazon, Target, ASOS, JC Penney, and Neiman Marcus all use visual search to improve customer experience and execute digital marketing strategies like shop-the-look, cross-selling, and much more.

Here are just some of the reasons why you should consider adding it to your online store:

Reason #1: Shop the Look

Your customers often see products they’d like to buy on their way to work, in magazines, and on websites. Sometimes it’s hard for them to run a text search simply because they’re not sure how to describe the product.

For instance, a consumer might like the new sofa at their dentist’s office but not know what it’s called, what type of sofa it is, or what the general sofa-buying jargon is.

Visual search lets them find that exact sofa (or the closest match) using machine learning algorithms. If you sell sofas, you could benefit from integrating a visual search module into your online store.

Reason #2: Social Media Influenced Purchases

Studies indicate that nearly 74% of online shoppers rely on social networks to guide their purchase decisions, and more than 69% of Generation Z consumers are interested in making purchases directly through social media platforms.

Visual search helps consumers find the products they see in their social feeds simply by uploading an image.

Reason #3: Cross-Selling

Because visual search algorithms rank results by similarity score, shoppers see similar products alongside the exact match they searched for. By presenting customers with these similar products, visual search doubles as an excellent cross-selling tool.
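As a rough sketch of that cross-selling step, assume the ranking step has already produced similarity scores (the IDs and numbers below are hypothetical). Recommending similar products then amounts to taking the top few scores while skipping the item the shopper already picked, which `heapq.nlargest` from Python’s standard library handles directly.

```python
import heapq

# Hypothetical similarity scores between the product a shopper searched for
# ("SKU-1") and other catalog items, e.g. as produced by a ranking step.
scores = {"SKU-1": 1.0, "SKU-3": 0.67, "SKU-4": 0.33, "SKU-5": 0.5, "SKU-2": 0.0}


def cross_sell(scores, exclude, k=2):
    """Top-k similar products, skipping the chosen one and zero-score items."""
    candidates = ((pid, s) for pid, s in scores.items() if pid != exclude and s > 0)
    top = heapq.nlargest(k, candidates, key=lambda item: item[1])
    return [pid for pid, _ in top]


print(cross_sell(scores, exclude="SKU-1"))  # ['SKU-3', 'SKU-5']
```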

This is a big benefit, because cross-selling can help reduce cart abandonment rates.

Conclusion

Visual search uses images to get around the inconsistency of words in traditional text-based search, bridging the gap between the words store owners use to describe their products and the terms consumers use to search for them.

It gives you the opportunity to deliver value to your customers with proven marketing techniques that can improve their experience with your brand and, in turn, help reduce cart abandonment rates.

Do you think visual search is better than traditional, text-based search when it comes to online shopping? How so? We’d love to hear from you so let us know by commenting below!
